The More We Use AI, The More We Forget.

And why that isn’t AI’s fault.

Part 2 of a series, building on “Friction and the Discipline of Thinking” (Feb 14, 2026)

Wilson Enrique Fujinaga · UX Researcher & Design Strategist · willeauxbee

The Thought That Got Away

I was mid-conversation today. Ideas were flowing and momentum was building when a thought arrived that felt important: the kind that doesn’t just land a point in an argument but sharpens its edge. And then it was gone.

Not because I’m forgetful, but because the speed of the exchange outpaced my capacity to hold it. I noted it out loud: speed loses its value when it exceeds what we can actually hold.

The irony wasn’t lost on me. I was in the middle of writing an essay about AI and cognitive load, thinking about what happens when you accelerate cognition without expanding working memory, and the tool designed to help me think faster had, in that moment, made me forget faster too.

Then I slowed down. Concentrated. Backtracked. And the thought returned, and it changed everything about what this essay needed to say.

Where We Left Off

In Part 1 of this series, I argued that frictionless environments don’t eliminate the burden of judgment; they relocate it, quietly, to the least supported actor in the system: the individual user. [1]

The muscle of critical thinking weakens not through failure, but through ease; when systems remove hesitation, the habit of pausing to examine what you’re doing quietly disappears. If that is true, then cognition is not fixed. It is shaped over time by the tools we use, and by when we begin using them.

This essay picks up where that one left off. If frictionless design reshapes cognition over time, the next question becomes unavoidable: what happens when you introduce the most powerful frictionless tool ever built, AI, to people who were formed in radically different cognitive environments?

The answer depends entirely on who’s using it.

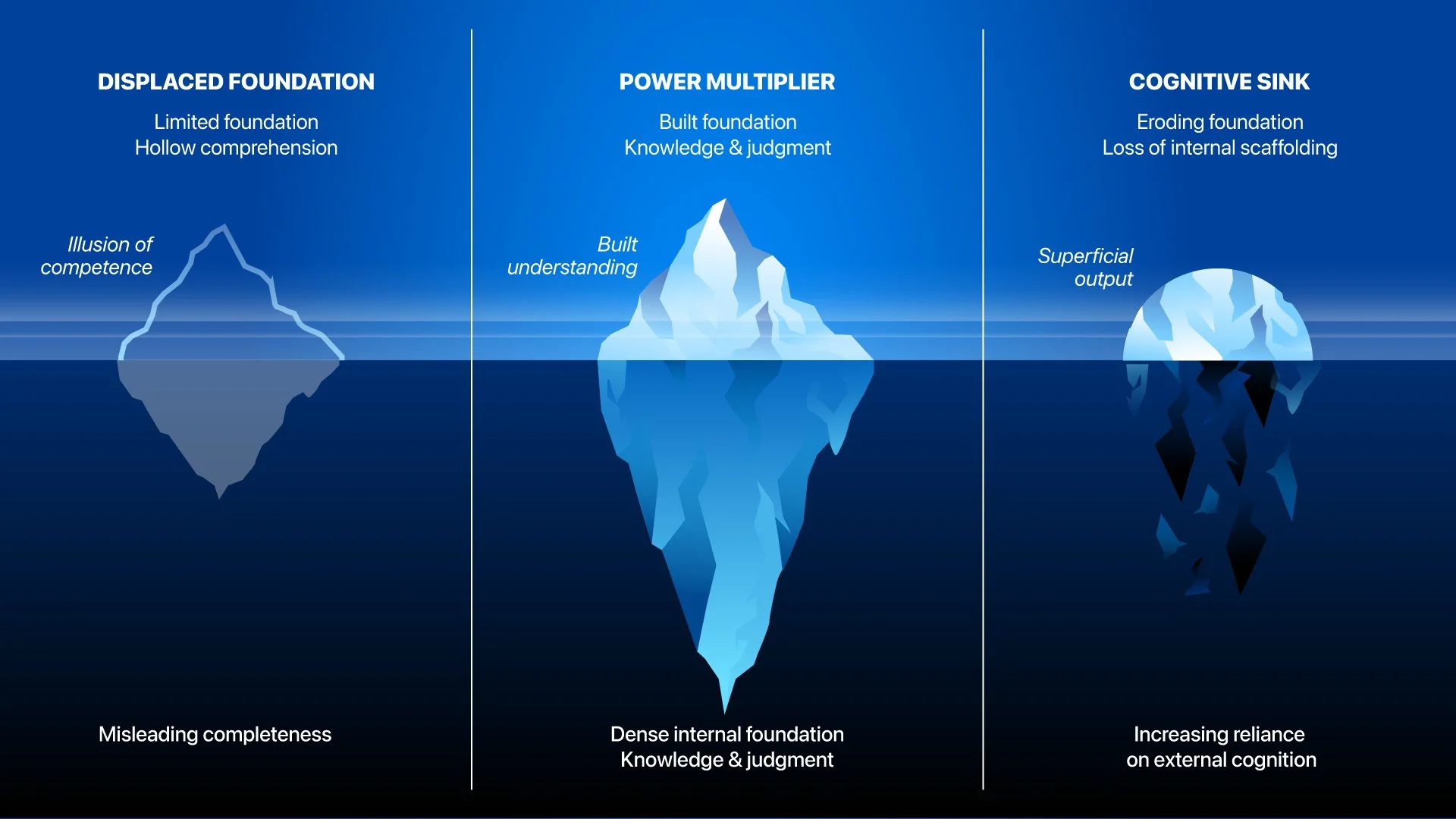

Image created by author.

We Weren’t Wrong About Social Media. We Were Just Ignored.

We have been here before.

When social media first arrived, older generations abhorred it; some still do. Not out of vague technophobia, but out of something they recognized before they could fully articulate it. In time, they saw what it was: an unregulated, infinitely scalable broadcast tool handed to the general public with no training, no guardrails, and almost no friction between thought and distribution.

We called it propaganda. We were right.

What nobody fully anticipated was the scale at which it would be weaponized, not just by bad actors, but by the architecture itself: platforms optimized for engagement over truth and velocity over verification, tuned to amplify whatever was most emotionally charged, because that was what kept people there longest. [2]

The result was an environment where the most emotionally volatile content traveled fastest, and where the line between reality and performance became genuinely difficult to locate, not because people suddenly stopped caring about truth, but because it became easier not to question, and the system rewarded attention long before it rewarded accuracy. [2]

We now have generations who grew up inside that environment, who have never known a public discourse that wasn’t mediated, accelerated, and algorithmically curated, and who are, in measurable numbers, struggling to distinguish between what is real and what is constructed for effect, or to stay within the boundaries of an argument instead of escalating beyond it.

We were not disciplined enough for a tool like social media. We have the receipts now.

This is not an argument that social media should not exist. It is an argument that the tool arrived before the population was prepared to hold it, and that we are now watching, in real time, what happens when a generation is formed inside a system that was never designed with their cognitive development in mind.

As I argued in an earlier essay, the problem isn’t just the facts. It’s also the format: the system makes information easier to spread and harder to verify. [2]

AI is the next tool in that sequence, and a far more capable one. This is not the same conversation we had about social media, but it carries a familiar warning. Social media spread outward over time; AI is being adopted everywhere, all at once, because the infrastructure is already in place. And there are no guardrails for how it is being adopted.

The difference this time is not the stakes, but whether we were prepared to hold a tool like this in the first place.

The Dangerous AI User, In the Best Sense

Those formed before the internet did not adopt AI immediately; they watched, they questioned, they resisted, not out of fear exactly, but out of a deeply ingrained habit of verifying before trusting, the same instinct that made them skeptical of social media and late to every “next big thing” that arrived without a manual.

That caution turns out to be exactly the right posture for a tool this powerful. Research points the same way: in one study, Gen X and Millennial teachers expressed heightened concerns about over-reliance on AI and emphasized the need for verification and guidelines before broad adoption, while their Gen Z students embraced AI tools with optimism and minimal friction. [4]

Now, having crossed over, they bring something rare to AI: a reservoir of accumulated knowledge, process experience, and hard-won judgment, now paired with a tool that can execute at a scale and speed they never had access to before.

The combination is formidable, not because they let AI run freely, but precisely because they don’t.

They monitor. They audit. They catch what a less experienced eye would miss, or never think to look for, and they know when the output is wrong because they have produced the correct version manually, sometimes for decades.

The patience to verify is not a personality trait. It is generational infrastructure.

AI is only as good as the hand that holds it. And not every hand was trained the same way.

This is AI as a power multiplier, not AI as a replacement; the distinction matters, because a multiplier requires something to multiply, and those who built their cognitive muscle before the scaffolding arrived have exactly that.

That doesn’t mean this group is uniformly wise or immune to distortion; it means, more quietly, that they have a felt sense of what it is like to think without the tool, and that this memory becomes a kind of internal safety rail when the tool starts to suggest it can think for them.

Can We Say the Same for…?

This question requires care. It is not an indictment.

Those formed inside fully networked, always-on, algorithmically curated environments process faster, wider, and across more simultaneous inputs than any prior generation.

They are adaptive and capable of synthesizing information at remarkable speed. That is real capability.

But speed and depth operate on different axes.

The question is not whether they are intelligent. It is whether the cognitive foundation was fully in place before reasoning was routinely externalized.

When you have always had the answer available instantly, do you develop the same tolerance for sitting inside the question, for holding uncertainty long enough that it reorganizes into understanding instead of moving immediately to something easier?

When the algorithm has always curated your information environment, do you build the same instinct for detecting distortion, or does the stream feel so natural that the idea of stepping outside it to check its shape never quite occurs?

When social media shaped your earliest understanding of social reality, do you have the external reference point that allows you to feel when something is off? Or does the performance become so tightly intertwined with reality that separating them feels less like discernment and more like something unnatural?

These are not rhetorical questions. They are open ones.

A Byproduct of Our Era

They are not at fault. That matters, and it needs to be said plainly.

What we are watching in those formed entirely inside networked, always-on environments is not a character failure. It is a formation gap: the predictable outcome of two contradictory failures happening at the same time.

On one side, overcorrection. They were told they could do everything, achieve everything, be anything, and that discomfort was a sign something had gone wrong rather than a sign something was being built. Protection often replaced exposure to consequence.

On the other side, a different kind of absence. Not the latchkey kind, with its physical solitude, clear stakes, and real responsibility. This absence is more diffuse: surrounded by devices, platforms, and an endless current of curated content, but without stable structures to interpret it. Connected to everything. Anchored to very little. Their friction is social and emotional rather than physical and logistical.

We were left alone with the world. They were left alone inside a simulation of it.

The assertiveness you see in them, the declarative conviction and the resistance to interruption, is not arrogance. It is the appearance of confidence formed in environments where feedback loops are immediate, visible, and often reinforcing. And that appearance, however strong, is not the same as an internally constructed foundation.

They were not failed by neglect exactly. They were shaped by a different kind of absence. The kind that looks like presence.

These questions matter because AI, unlike social media, does not just distribute information; it generates it. It reasons, it recommends, it writes. The cognitive demand it places on the user, especially the demand to question what already appears coherent, is of a different kind entirely.

AI is impacting people differently. Not because the tool is different. But because the people are. [5]

Image by author.

It’s Not AI’s Fault

AI did not decide to make us forget. Social media did not decide to flatten public discourse. These tools do not have intentions. They have architectures. And architectures shape behavior reliably and at scale, whether quietly or not, one edge case after another.

The question has never been whether the tools are dangerous. It is whether the people using them are prepared. Whether they were formed with enough friction, enough boredom, enough uncertainty, enough figuring-it-out-alone, to have something to stand on when the tool tries to do the standing for them.

In Part 1 of this series, I argued that to remove friction entirely is not to humanize technology, but to flatten both technology and us. [1] Here, in Part 2, I look more closely at how formation shapes cognition.

What I am describing is not abstract. It is observable. I am watching people who were once adamantly opposed to AI begin to adopt it in constrained ways, using it to refine emails, structure thoughts, and produce more polished output than they would on their own. The effort shifts. The thought is still being formed, but instead of working through multiple rounds of revision themselves, people move into an editorial role, shaping output that arrives already structured. The process becomes more efficient.

At the same time, I am seeing younger users approach the tool differently, prompting and accepting output with little verification, treating the response itself as sufficient. The contrast is not about intelligence. It is about formation.

The tool is the same. The way it is used is not.

This essay is the corollary. The people least flattened by frictionless design are those who were fully formed before it arrived.

We weren’t wrong about social media. We just got here first.

References

Author’s Published Work

[1] Fujinaga, W.E. “Friction and the Discipline of Thinking.” Medium, February 14, 2026. https://medium.com/@wilsonenriquefujinaga/friction-and-the-discipline-of-thinking-9a1d9a1b9cfb

[2] Fujinaga, W.E. “The Social Media Crisis Is Not About Facts. It’s About Format.” Medium, March 2, 2026. https://medium.com/@wilsonenriquefujinaga/the-social-media-crisis-is-not-about-facts-its-about-format-29f184d811d8

[3] Fujinaga, W.E. “Making Structure Machine-Readable.” Muzli Design Inspiration, Medium, 2026. https://medium.com/muzli-design-inspiration/part-2-making-structure-machine-readable-be469d6d0209

External Sources

[4] Chan, C.K.Y. & Lee, K.K.W. “The AI Generation Gap: Are Gen Z Students More Interested in Adopting Generative AI Than Their Gen X and Millennial Generation Teachers?” Smart Learning Environments, Springer Nature, November 2023. https://link.springer.com/article/10.1186/s40561-023-00269-3

[5] Gerlich, M. “AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking.” Societies, MDPI, 15(1), 6. January 2025. https://doi.org/10.3390/soc15010006

Note: References [1–3] are works by the author reflecting a developing body of work on friction, format, and cognitive formation. References [4–5] are peer-reviewed external sources that corroborate the essay’s core arguments.