The Social Media Crisis Is Not About Facts. It’s About Format.

How might we make credibility visible when everything is styled to feel true?

Social media platforms now function as de facto news distributors. The crisis in our feeds is not simply that posts are lies. It’s that they are mixtures of truth and distortion.

Some countries attempt to solve this through credential mandates. Others rely on labeling systems or content removal. But these measures still focus on content or identity. The structural incentives remain untouched.

What we are facing is something different. Some truths have been remodeled into bingeable stories, optimized for reach rather than clarity.

Scroll any platform and the pattern repeats. Posts styled like journalism, health advice, or psychological self-tests appear with breathless headlines, confident tone, a chart or two, and now AI-generated images that are difficult to distinguish from authentic ones. They read like a mashup of breaking news, insightful theory, and personal diary. Knowledgeable. Affirming. Sometimes answering a question you hadn’t yet articulated.

The method is simple: a few real facts woven together with speculation, outrage, and cliffhanger storytelling.

The result is not just misinformation. It is anyone-produced infotainment, packaged to resemble evidence.

Misinformation is not new. Twentieth-century media theorists long argued that the structure of a medium shapes how information is perceived. McLuhan’s observation that “the medium is the message,”¹ Postman’s warning about entertainment-driven discourse,² and Ellul’s analysis of propaganda³ all point to the same insight: manipulation often operates through interpretive framing rather than outright falsehood. Today’s platforms intensify that dynamic through algorithmic ranking and interface design,⁴ reinforcing how technical architecture governs informational flow.⁵

What has changed is not human susceptibility, but the scale and structural incentives of distribution.

Most policy debates still treat this as a content problem. If a post contains a false claim, platforms should label it, throttle it, or remove it. That sounds reasonable until you ask who decides what is false when it is embedded within otherwise accurate reporting, and under what political pressure. Such systems quickly slide into overreach or inconsistency. Meanwhile, automated accounts and coordinated networks generate content faster than human or hybrid fact-checkers can evaluate it, a dynamic well documented in research on misinformation spread and amplification speed.⁶ Even specialized reviewers in public health, policy, or governance struggle to keep pace with the velocity and layered rhetoric of real-time political communication.

There is another way to think about this crisis.

What if the danger lies less in what posts say than in the narrative format they use?

Truth in everyday life is usually simple. “The bridge is closed.” “The drug failed its trial.” “The baby is sleeping.” These statements are compact and direct. They point to something outside themselves: a public notice, a published study, a warning to be quiet. They do not require dramatic buildup. There is no villain. No hidden plot. No emotional arc. They either match the moment or they do not. If they are wrong, you can check. They do not try to overwhelm or seduce. They state a condition.

Manipulation works differently. And often, we don’t recognize it as manipulation.

To make a weak claim feel solid, you wrap it in a story. Introduce villains and heroes. Suggest secrets and revelations. Add screenshots and anecdotes. Layer in fear, anger, envy, desire, even something outlandish. A few verifiable facts inside a structured narrative can carry a great deal of speculative baggage. The weaker the core claim, the more scaffolding it often needs.

Social media is finely tuned to reward that scaffolding. The platform is built for speed. For scroll. For impulse. It is not built for sustained attention.

Dramatic threads generate more engagement than matter-of-fact statements. Emotion travels faster than documentation. Posts promising hidden knowledge are more clickable than modest explanations. As a result, platforms amplify content that blends fact and fiction inside an entertaining frame.

The system is built to reward what spreads.

Posts that trigger strong reactions move faster than posts that require reflection. Speed is measured. Engagement is measured. Structural rigor is not. The labor behind verified work is largely invisible, while performance is instantly rewarded. In that environment, content that blends truth and distortion is more likely to travel than content that simply informs.

If the problem is structural, the response to it must also be structural.

Automated and hybrid fact-checking systems are designed to evaluate discrete claims inside a post. They rely on machine learning classifiers, verification partners, and user reports to compare assertions against known records or prior debunks. When a claim matches an existing misinformation pattern, it can be labeled or down-ranked.

But this model assumes misinformation behaves like a sentence. A single bad word. A lurid image.

In practice, misinformation behaves like a well-constructed story.

When falsehoods are woven into true details, especially through implication and emotional framing rather than crisp, falsifiable statements, systems struggle to isolate them. A post may contain only technically accurate sentences while still guiding readers toward a misleading conclusion.

Fact-checking systems are built to test assertions. They are not built to test narrative structure. We’ve grown better at spotting synthetic tone, like AI-generated sentences. Yet we remain vulnerable to stories that blend truth and distortion into coherent emotional arcs. Surface detection is not structural literacy.

That mismatch creates the vulnerability we are living in.

Government pressure to remove false content introduces another risk: politicized information control and disputes over who decides what qualifies as false. Whether the intervention is labeling or regulatory takedown, both remain focused on the wrong element. Neither addresses the incentive structure that rewards convoluted storytelling in the first place.

Instead of trying to arbitrate truth, platforms and regulators should govern structure.

The question should shift from “Is this claim true?” to “How is this claim being positioned?”

Posts that imitate journalism should meet basic claim standards. Factual assertions should be supported by documented sourcing, displayed directly or maintained as verifiable documentation. Fact, opinion, and speculation should be clearly separated. Interviews and research should not be implied. They should exist.

If accounts want the reach and credibility benefits of presenting information as authoritative, they should accept the responsibilities that come with it.

Authority is not something you can cosplay.

Ranking systems should stop rewarding high-drama narratives that offer little verifiable substance. A post making twenty serious claims and offering zero sources should not receive the same algorithmic boost as a concise, well-documented explanation simply because it comes from an influential personality, media figure, or viral brand. Structural clarity should function as a quality signal.

The core disinformation mechanic is not the post. It is the frictionless share. The share button can make users unwitting participants in misinformation long after they have moved on. Attention is finite. Replication is not.

Users deserve tools that reveal the skeleton of what they are about to consume. Imagine an information nutrition label on viral posts: number of factual claims, number of sources, sentiment intensity, prior corrections. You could still share what you like. But the architecture would be visible rather than hidden behind aesthetics or popularity.
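
One way to picture such a label is as a small data record attached to a post. The sketch below is only an illustration: the class name `NutritionLabel`, its field names, and the 0-to-1 scale for sentiment intensity are assumptions made for this example, not a description of any platform's actual system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NutritionLabel:
    """Hypothetical structural summary shown before a user shares a post."""
    factual_claims: int          # number of factual claims in the post
    sources: int                 # number of sources attached to those claims
    sentiment_intensity: float   # assumed scale: 0.0 (neutral) to 1.0 (highly charged)
    prior_corrections: int       # corrections previously issued for this post

    def sources_per_claim(self) -> float:
        """Ratio of sources to claims; 0.0 when the post makes no claims."""
        if self.factual_claims == 0:
            return 0.0
        return self.sources / self.factual_claims

    def render(self) -> str:
        """Format the label as a single plain-text line."""
        return (
            f"Claims: {self.factual_claims} | "
            f"Sources: {self.sources} | "
            f"Sentiment intensity: {self.sentiment_intensity:.0%} | "
            f"Prior corrections: {self.prior_corrections}"
        )


# Illustrative values only: a post making twenty claims with zero sources.
label = NutritionLabel(factual_claims=20, sources=0,
                       sentiment_intensity=0.9, prior_corrections=2)
print(label.render())
```

Nothing in this record judges whether a claim is true. It only counts what is visibly there, which is the point of the label.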

None of this requires a Ministry of Truth. Governments can require transparency and auditing of ranking systems without dictating viewpoints. Platforms already regulate structure in other domains. They enforce rules against spam formats. They design friction around malware. They block coordinated manipulation patterns. Yet when posts simulate authority without accountability, structural standards disappear.

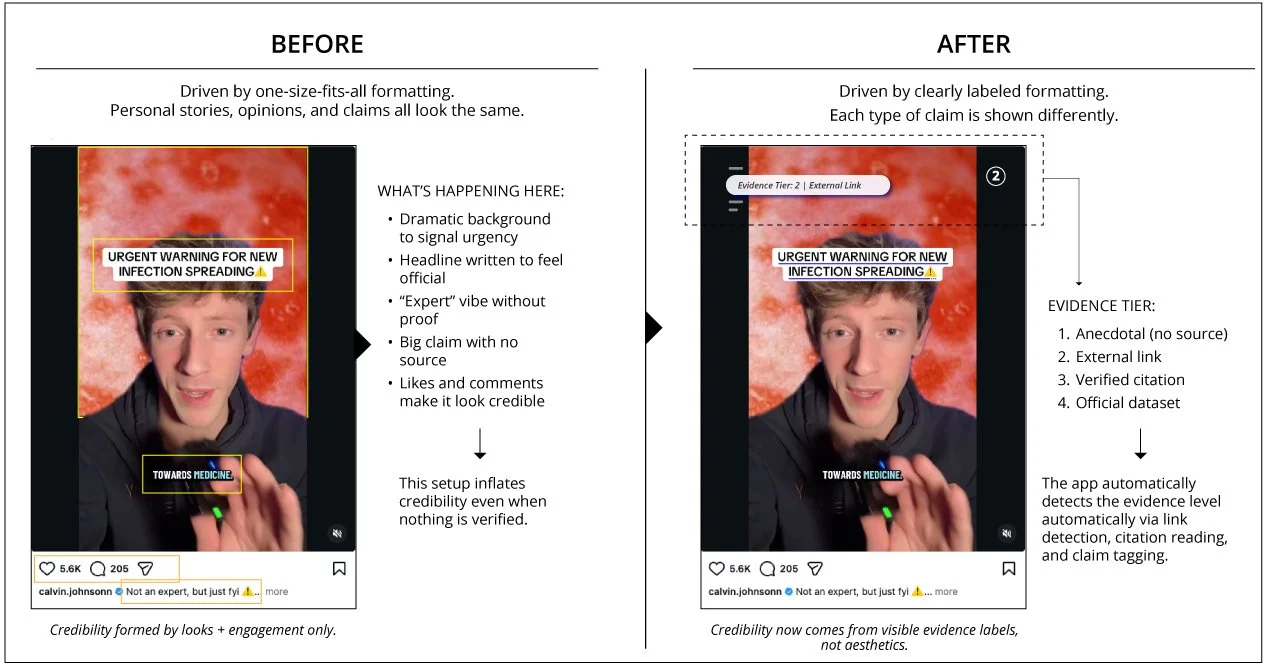

Same video. Same creator. Two different outcomes. The difference is structure — not content.

Structural Guardrails

Visible Claim Breakdown

Factual posts should show the basics: how many claims are being made, how many sources back them, and whether those sources are primary or secondhand.

Clear Lines Between Fact and Commentary

Readers shouldn’t have to decode tone. Formatting should make it obvious where reporting ends and where interpretation begins.

Evidence for Any Post That “Looks” Authoritative

If a post adopts the look of journalism, analysis, or expertise, it should link to something verifiable. Authority should be earned, not implied.

Rigor That Actually Matters in Ranking

Quality signals like sourcing, transparent methods, and correction history should give posts a lift, not sit there as decorative metadata.

Corrections That Travel With the Post

If something changes or gets updated, the revision history should remain visible. Accountability shouldn’t disappear as the post spreads.

Gentle Friction for Fast-Moving, Unsourced Claims

When a post with no visible evidence goes viral, the platform should introduce a moment of friction: a pause long enough to slow reflexive sharing.

Reposts Without Added Clarity Should Downshift Over Time

Content that’s repeatedly reshared without new sourcing or context shouldn’t rise in visibility just because it’s moving quickly.

Ranking Logic That Isn’t a Secret

Platforms should clearly explain which structural signals influence reach. If sourcing or corrections matter, users should be able to see why and how. Systems that shape public knowledge shouldn’t operate behind glass.
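
Two of these guardrails, friction for unsourced viral posts and the downshift for reposts that add nothing, are simple enough to sketch as rules. The function names, the share threshold of 1,000 per hour, and the 0.9 decay factor below are all hypothetical values chosen for illustration; a real platform would have to tune and publish its own.

```python
def needs_share_friction(source_count: int, shares_last_hour: int,
                         viral_threshold: int = 1000) -> bool:
    """Return True when an unsourced post is spreading fast enough to warrant a pause.

    The threshold is an assumed value, not a recommendation.
    """
    return source_count == 0 and shares_last_hour >= viral_threshold


def repost_weight(reshares_without_new_sourcing: int, decay: float = 0.9) -> float:
    """Ranking multiplier that shrinks with each reshare adding no sourcing or context.

    Starts at 1.0 and decays geometrically; the decay factor is an assumption.
    """
    return decay ** reshares_without_new_sourcing


# An unsourced post shared 5,000 times in an hour triggers the pause.
print(needs_share_friction(source_count=0, shares_last_hour=5000))
# The same velocity with three sources attached does not.
print(needs_share_friction(source_count=3, shares_last_hour=5000))
```

Neither rule looks at what the post says or who posted it. Both respond only to structure: whether evidence is attached and whether resharing has added any.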

The information crisis is often framed as a war over facts.

It is a war over format.

That’s manageable.

As long as manipulative narratives enjoy the most structurally advantageous position in our feeds, no amount of after-the-fact correction will stabilize the system.

Truth does not need to be boring.

This is not a call for institutional gatekeeping. Requiring professional accreditation before someone can speak would merely exchange one crisis for another, privileging established power over independent rigor. The burden should fall on the structure of the argument itself. A licensed professional can be negligent. A citizen journalist can be forthright.

The platform should not care who is speaking. It should care about the integrity of the structure. If a post adopts the aesthetic of authority, it must bear the structural weight of evidence.

The solution is not to police expression. It is to ensure that structural credibility is not counterfeited by format alone.

Appendix

Evidence Tier Guide (Quick Reference)

Tier 1 — Anecdotal

Personal observations or lived experience without any source attached.

Examples: “This happened to me,” “People I know are getting sick.”

Tier 2 — External Link

A post that includes a link to an outside article, blog, or website, but not a primary source.

Examples: news articles, commentary pieces, summaries.

Tier 3 — Verified Citation

A post supported by a verifiable source such as a research paper, government document, or official report.

Examples: DOI links, formal citations, named institutions with searchable reports.

Tier 4 — Institutional Dataset

Claims supported by structured, authoritative data from organizations that publish raw or open datasets.

Examples: CDC, WHO, U.S. Census, national statistical bureaus.
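
For readers who think in code, the four tiers can be written as an ordered enumeration. This is a minimal sketch: the identifier names and the `strongest_tier` helper are hypothetical, and treating a post with no attached evidence as Tier 1 is an assumption of the example rather than part of the guide.

```python
from enum import IntEnum


class EvidenceTier(IntEnum):
    """The four tiers from the guide above, ordered from weakest to strongest."""
    ANECDOTAL = 1              # personal observation, no source attached
    EXTERNAL_LINK = 2          # link to an article or summary, not a primary source
    VERIFIED_CITATION = 3      # research paper, government document, official report
    INSTITUTIONAL_DATASET = 4  # structured data from an organization publishing open datasets


def strongest_tier(tiers: list[EvidenceTier]) -> EvidenceTier:
    """Return the highest tier among a post's sources.

    A post with no sources is treated as anecdotal (an assumption of this sketch).
    """
    return max(tiers, default=EvidenceTier.ANECDOTAL)


post_sources = [EvidenceTier.EXTERNAL_LINK, EvidenceTier.VERIFIED_CITATION]
print(strongest_tier(post_sources).name)
```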

Notes & Sources

OpenAI, Model Spec — https://model-spec.openai.com

EBSCO Research Starters, “Media Manipulation” — https://www.ebsco.com/research-starters/social-sciences-and-humanities/media-manipulation

SAGE Journals — https://journals.sagepub.com/doi/10.1177/29768624251367199

Proceedings of the National Academy of Sciences (PNAS) — https://www.pnas.org/doi/10.1073/pnas.1914085117

Carnegie Endowment for International Peace, “Countering Disinformation Effectively: An Evidence-Based Policy Guide” — https://carnegieendowment.org/research/2024/01/countering-disinformation-effectively-an-evidence-based-policy-guide

National Center for Biotechnology Information (PMC). https://pmc.ncbi.nlm.nih.gov/articles/PMC11894805/